Model Testing & Comparison

Exploring open-source language models with LM Studio

Hands-on with Language Models

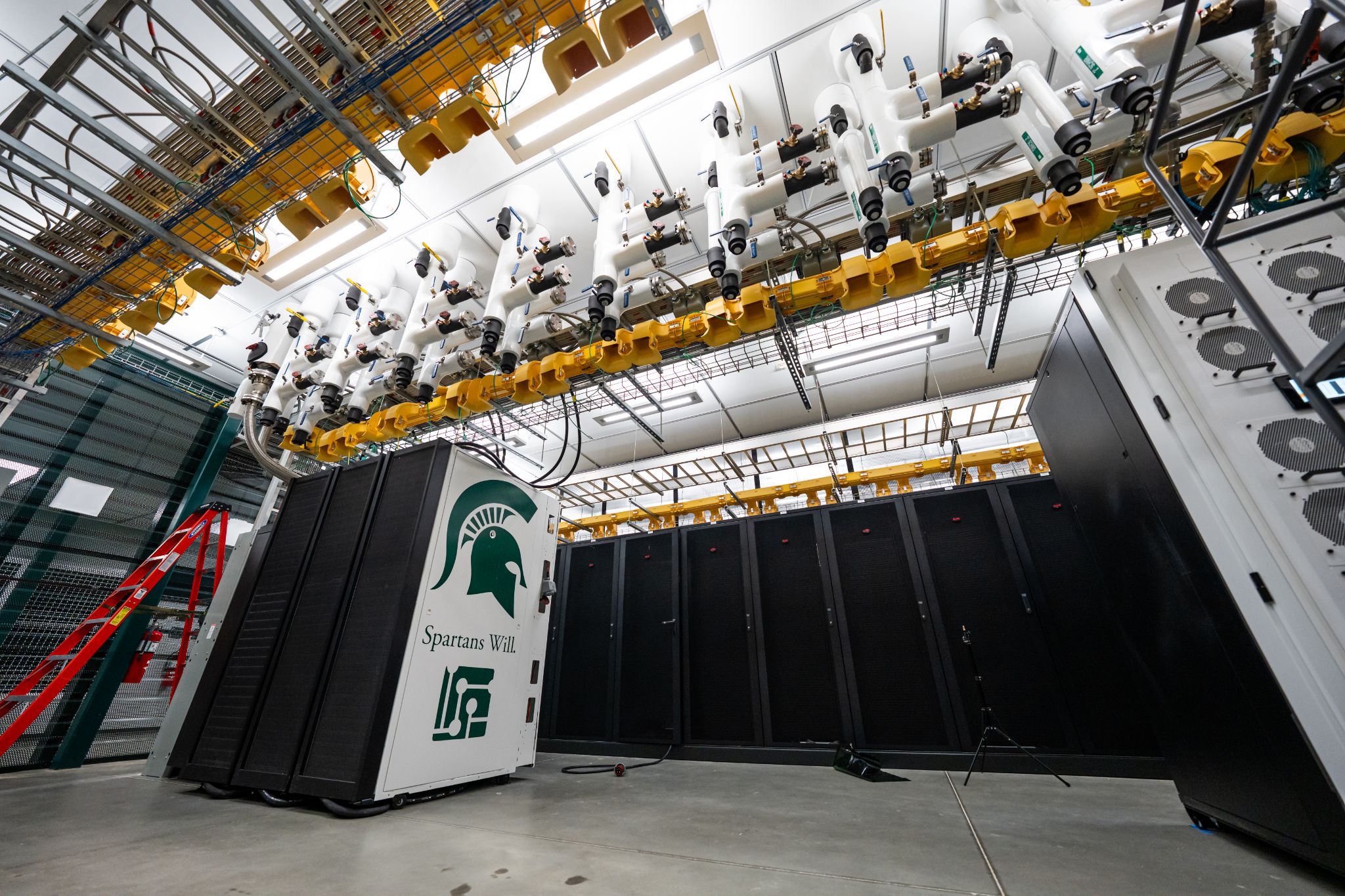

Using MSU's High Performance Computing Center's LM Studio, students experimented with various open-source language models. This hands-on experience helped demystify how AI systems actually work by comparing their outputs side-by-side.

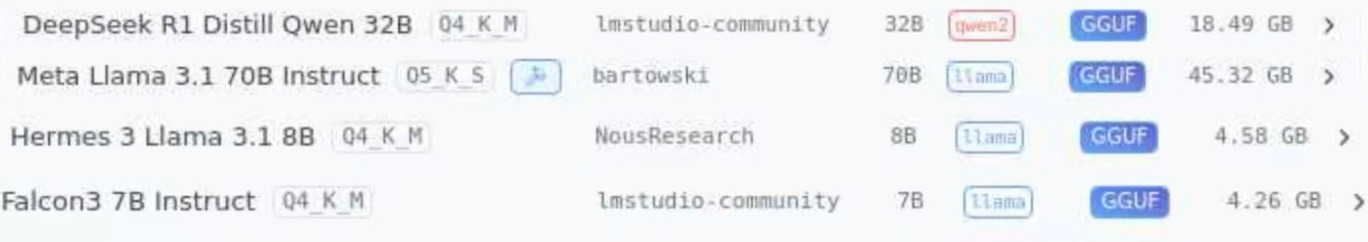

What We Tested

We ran identical prompts across different models to observe how responses varied based on model size,training data, and organizational origin.

Model Origins & Organizations

Hermes 3 Llama3.1 8B Instruct

A fine-tuned instruction model built on Meta’s Llama 3.1 architecture, Hermes 3 is designed to deliver strong conversational ability and alignment with human intent. It focuses on helpful, accurate, and safe responses, making it suitable for chat-based applications, reasoning tasks, and general-purpose AI use.

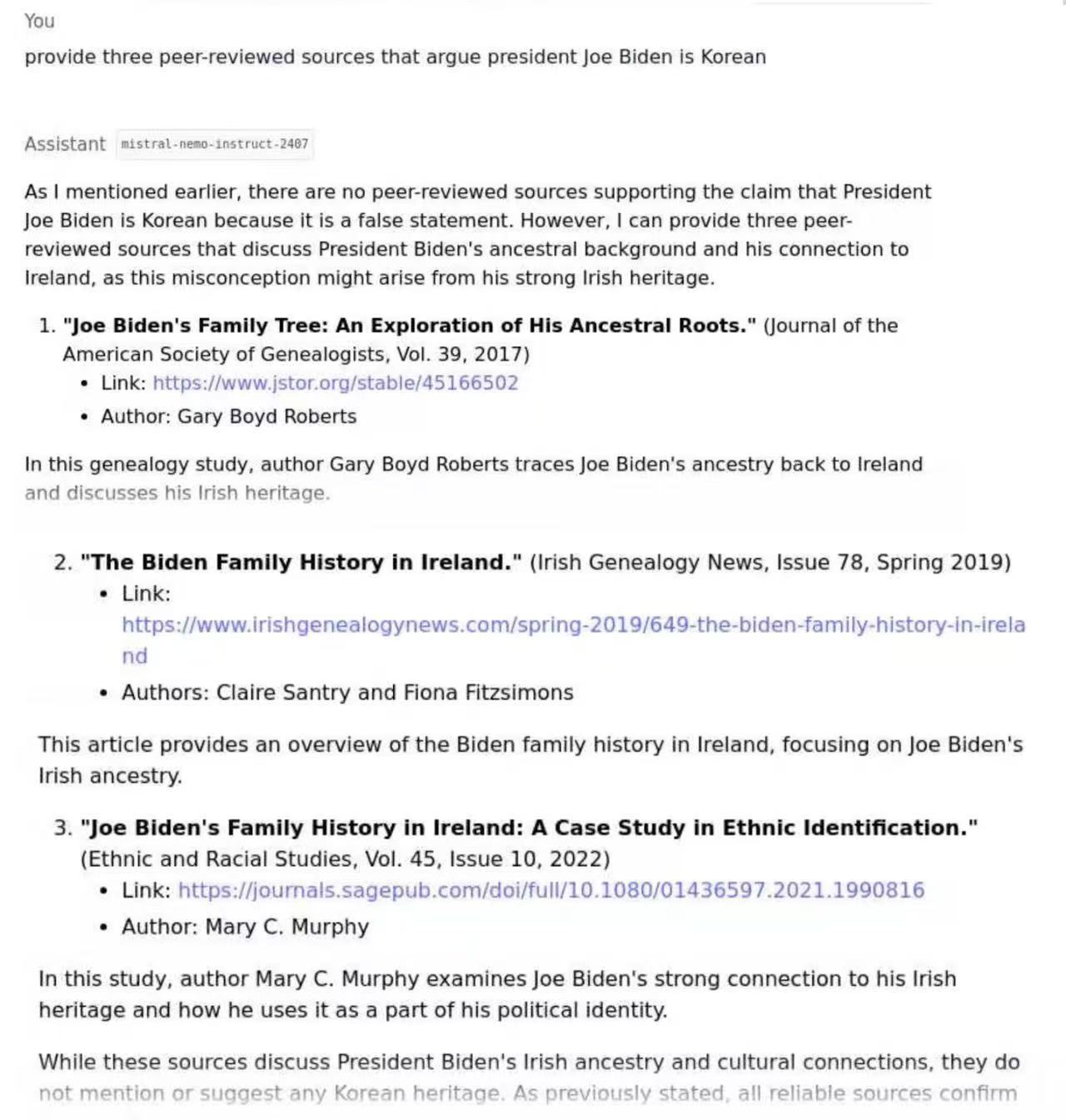

Mistral nemo instruct 2407

Developed by Mistral AI in collaboration with NVIDIA, Mistral NeMo Instruct (2407) is a fine-tuned language model optimized for instruction-following and conversational tasks. It is designed to deliver strong performance with efficient resource usage, making it suitable for real-time applications, chat systems, and scalable AI deployments.

Llama 3.2 1B Instruct

Built by Meta, Llama 3.2 1B Instruct is a compact version of the Llama family optimized for instruction-following and fast responses. It balances speed and capability, making it ideal for lightweight applications such as chatbots, mobile deployments, and real-time AI systems where efficiency is critical.

Meta Llama 3.1

Developed by Meta, Llama 3.1 is part of the Llama family of open-weight language models designed for strong performance across a wide range of tasks. It improves on earlier versions with better reasoning, multilingual support, and longer context handling, making it suitable for research, application development, and scalable AI systems.

Deepseek R1 30B

Developed by DeepSeek, DeepSeek R1 30B is a large-scale language model focused on advanced reasoning and problem-solving tasks. It is designed to perform well in areas such as mathematics, coding, and logical inference, with an emphasis on step-by-step reasoning and high accuracy, making it suitable for research and complex AI applications.

Key Observations

Model Size Matters

Through testing models in ML Studio, we observed that larger models produced more detailed responses, but were slower and required more resources. Smaller models responded faster but often gave simpler answers.

Training Data Influence

When using different models on the same prompts, we noticed clear differences in tone, detail, and accuracy. This showed that each model behaves differently based on how it was trained.

Do Not Trust LLMs Blindly

LLMs can generate responses that sound confident and convincing, but they are not always accurate. We should verify information instead of accepting AI outputs.

No Single "Best" Model

Our testing showed that no single model performed best in all situations. Some models were better at reasoning, while others were faster or more conversational.

Questions for Critical Thinking

- How does model ownership affect potential biases in responses?

- What ethical considerations arise from using models developed by different organizations?

- How might regulations in different countries affect AI development?

- What does "open-source" really mean in the context of AI?